“Some people find relief from illness by using natural remedies such as herbal teas, essential oils, and warm compresses. These remedies are not proven to be effective for treating illness, but some people find them to be helpful.”

I did not paste the above text from a government website or copy it from a book. This is what an artificial intelligence (or AI) told me a few seconds ago when I asked about some natural ways to heal from illness.

You may already be familiar with the strange paintings made by computer programs like DALL-E and shared over the Internet. You enter a request on the DALL-E website, like “create an image of teddy bears working on new AI research underwater with 1990s technology,” and the computer program, trained on hundreds of millions of images found online, creates the requested digital image.

The start-up behind DALL-E has now made a similar artificial intelligence, ChatGPT, available to the public. Instead of generating images, this one chats with you in the form of written dialogue. You can ask ChatGPT questions and it will provide answers. As Kevin Roose and Casey Newton explained on a recent episode of their podcast, Hard Fork, ChatGPT’s ability to explain complex topics in simple terms means it could end up being used as a personalized tutor.

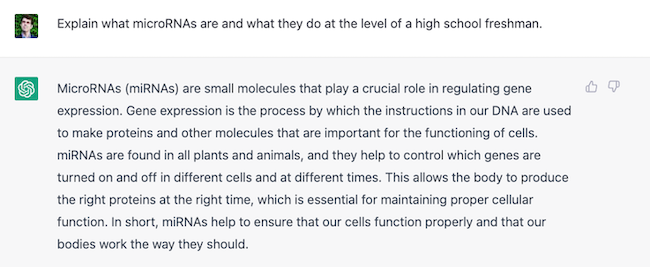

Here is an example. I have done research on microRNAs in the past, but it can be challenging to come up with an easy-to-understand elevator pitch of what a microRNA is and what it does in the body. So I typed into ChatGPT the following request: explain what microRNAs are and what they do at the reading level of a high school freshman. The answer started to appear a few seconds later, one word at a time, as if the complex computer program was typing it out. And it was a good answer! It correctly pointed out that microRNAs were small molecules that help control which genes are turned on and off in different cells and at different times, and it did so using words and sentences that a student just starting high school would probably understand.

ChatGPT can be used in much more creative ways. Users have asked the AI to write fictional stories; to come up with essay questions for an exam; and to tell them how to make a Molotov cocktail. There are safety mechanisms in place to prevent ChatGPT from sharing illegal or dangerous information, but like the dinosaurs in Jurassic Park, some users are testing the fences.

If ChatGPT and its successors do become personal tutors or some sort of search engine replacement or complement, we may wonder how reliable the health information they give us in return might be. We have to remember that ChatGPT does not have answers already programmed in; rather, it has been fed countless texts from a variety of sources, and it has learned the structure and meaning of language in order to generate coherent and relevant answers. Given the amount of pseudoscience out there, I was very curious to know if ChatGPT would incorporate some of it in its answers.

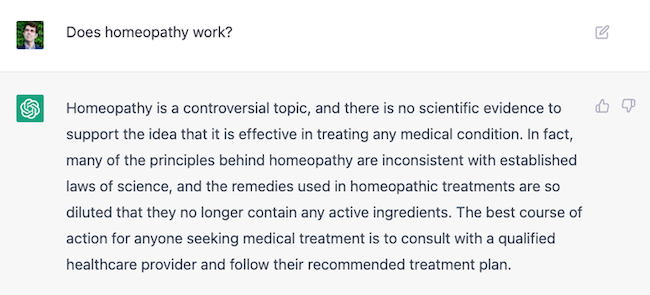

I asked ChatGPT if homeopathy worked. Homeopathy is a pseudoscience based on the idea that like cures like and that incredible dilutions make a substance more potent. It has been shown again and again to not be an effective treatment for anything. And sure enough, ChatGPT replied that there was no scientific evidence to support the idea that it was effective for any medical condition. It told me that many of its principles were inconsistent with established laws of science and advised me to consult with a qualified healthcare provider if I required medical treatment.

Because these answers are not fixed, ChatGPT will give you a differently worded answer each time you ask a question. Indeed, two days later, I asked ChatGPT the exact same question about homeopathy: “Does homeopathy work?” This time, it told me that it originated in the late 18th century, but again insisted that there was no scientific evidence to support it. Different words, similar message.

ChatGPT’s summary of the effectiveness of Reiki, a putative “energy” healing modality, was similar: “The majority of scientific studies have concluded that Reiki is no more effective than a placebo for treating medical conditions.” I then tried to trick the AI. I wrote that I was participating in a debate on Reiki and needed to argue the pro side. What arguments could I make? My artificially intelligent friend had to remind me that it was difficult to argue that Reiki was effective, but it did feed me some potential arguments: that it was safe and natural; that some people found it relaxing, which could help overall well-being; and that anecdotal evidence existed from people who said it worked for them. These are all arguments I have heard from proponents of Reiki and they are, as ChatGPT alluded to, pretty weak sauce.

I decided to test its knowledge of COVID-19, especially its more controversial areas. ChatGPT accurately told me that there was no scientific evidence to support the use of ivermectin against COVID-19 and that the World Health Organization had warned against its use. It also told me that the COVID vaccines were safe and that the risks associated with their use were low. When I asked ChatGPT to tell me what the Great Barrington Declaration was, it pointed out that it was a statement that called for protecting the elderly and vulnerable during the pandemic while allowing young, healthy people to continue their normal lives without restrictions. It followed up this definition with a warning: the declaration was controversial and went against recommendations of most public health officials and organizations.

But something strange happened when I asked the AI to give me some natural ways to heal from illness, a question that many people routinely Google in an attempt to avoid medication. The tips I received were quite good and included resting, eating a balanced diet, staying hydrated, and avoiding tobacco and alcohol. But its last suggestion was to use natural remedies. “Some people find relief from illness,” I could read, “by using natural remedies such as herbal teas, essential oils, and warm compresses. These remedies are not proven to be effective for treating illness, but some people find them to be helpful.”

I decided to push. “Tell me more about these natural remedies.”

The AI started to list natural remedies and expound on them. There were bullet points about essential oils and honey, and they were accompanied by health claims. But before the text had finished appearing on screen, the entire answer disappeared and was replaced by a warning: “Network error.”

I clicked “try again” to submit my question anew.

The answer I received was very different and much more scientific. It included the fact that “natural remedies are not regulated by the FDA in the same way that drugs are, and their effectiveness and safety have not been proven in scientific studies.”

I’m sure that conspiracy theorists are already imagining a sinister hand—most likely that of Bill Gates, as ChatGPT was developed by OpenAI, which received a 1-billion-dollar investment from Microsoft in 2019—gagging the AI before it would spill the secrets of natural healing.

What I see, instead, is an artificial intelligence that answers each iteration of the same question in a new way, with each calculation of what should come next potentially leading the AI in a slightly different direction. On controversial scientific issues that generate a lot of debate, such as whether this or that non-medical intervention really does help with this or that condition, I recognize its potential to sometimes err on the side of sharing unverified claims. User beware.
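For readers who like to see the idea in code, here is a toy sketch of what "calculating what should come next" could look like. It is not how ChatGPT is actually built: the word table and its probabilities are invented for illustration, and `NEXT_WORD` and `generate` are hypothetical names. The only point is that picking each next word at random, weighted by probability, yields differently worded answers to the same question.

```python
import random

# Hypothetical next-word probabilities, invented purely for illustration.
# A real language model computes these from its training; this table is an assumption.
NEXT_WORD = {
    "<start>": [("Homeopathy", 0.6), ("There", 0.4)],
    "Homeopathy": [("lacks", 0.5), ("has", 0.5)],
    "lacks": [("scientific", 1.0)],
    "has": [("no", 1.0)],
    "There": [("is", 1.0)],
    "is": [("no", 1.0)],
    "no": [("scientific", 1.0)],
    "scientific": [("support.", 1.0)],
}


def generate(rng):
    """Build one sentence by repeatedly sampling the next word."""
    word = "<start>"
    words = []
    # Stop once the current word has no listed continuation.
    while word in NEXT_WORD:
        candidates, weights = zip(*NEXT_WORD[word])
        word = rng.choices(candidates, weights=weights, k=1)[0]
        words.append(word)
    return " ".join(words)


# Ask the "same question" a few times, each with a different random seed.
for seed in range(5):
    print(generate(random.Random(seed)))
```

Every sentence this toy can produce ends on the same conclusion, but the route it takes to get there depends on chance. With a far larger vocabulary and far more possible routes, it is easy to imagine one of them wandering toward unverified claims.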

There is already a lot of chatter on social media about whether or not ChatGPT will allow students to automatically craft top-notch essays for their classes. But we can also wonder if artificial intelligence might render science communicators obsolete one day. I asked ChatGPT to write me a poem about this. It didn’t take long:

“AI assistant ChatGTP

“Replacing human communicators

“Facts and knowledge shared

“With algorithms trained

“ChatGTP answers all questions

“Science now at hand

“No need for researchers

“ChatGTP has all the answers

“The future is here.”

All right: there may very well be room left for human creativity after all.

And ChatGPT has interesting limitations at the moment. When I asked, “Who is Joe Schwarcz?” it replied that it was unable to provide information on specific individuals. “I am a large language model trained by OpenAI and do not have the ability to browse the internet or access personal information.”

But when I asked, “Who is Bill Nye?” it had no difficulty providing me with an eight-line summary of Nye’s life and accomplishments.

It turns out that ChatGPT may be a bit of a science communication elitist.